Visualizing Results

What if you want to share the results of flows with other people or inspect them yourself? One option is to use Jupyter notebooks and the Metaflow Client API, which is a good combination for ad-hoc analysis and exploration.

If you have a good idea of what information you want to observe in every execution, it is more convenient to produce a relevant report automatically. Metaflow comes with a built-in mechanism, called cards, for creating and viewing such reports with a few lines of code. Cards can contain images, text, and tables that help you observe the flow.

As of Metaflow 2.11, cards can update live while tasks are executing, so you can use them to monitor progress and visualize intermediate results of running tasks.

What are cards?

To get an idea of how cards can make an ML project observable, take a look at the following short video:

Here are some illustrative use cases that cards are a good fit for:

- Creating a report of model performance or data quality every time a task executes, for instance, every time a new model is trained.

- Monitoring progress of a long-running task.

- Sharing human-readable results with non-technical stakeholders.

- Debugging task behavior by attaching a suitable card to the flow during development.

- Tracking experiments by comparing results across multiple runs.

In contrast, cards are not meant for building complex, interactive dashboards or for ad-hoc exploration, which is a spot-on use case for notebooks. If you are curious, you can read more about the motivation for cards in the original release blog post and the announcement post for updating cards.

How to use cards?

This short video (no sound) shows cards from the developer point of view:

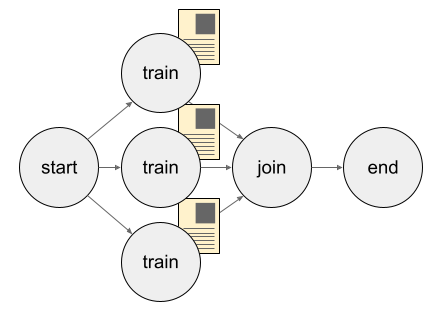

You can attach cards in any Metaflow step. When a task corresponding to the step finishes, an additional piece of code is executed which creates an HTML file visualizing the results of the task. In the illustration below, the train step trains three models in parallel by using foreach. The step is decorated with the @card decorator, so it produces a human-readable report alongside its usual programmatic results.

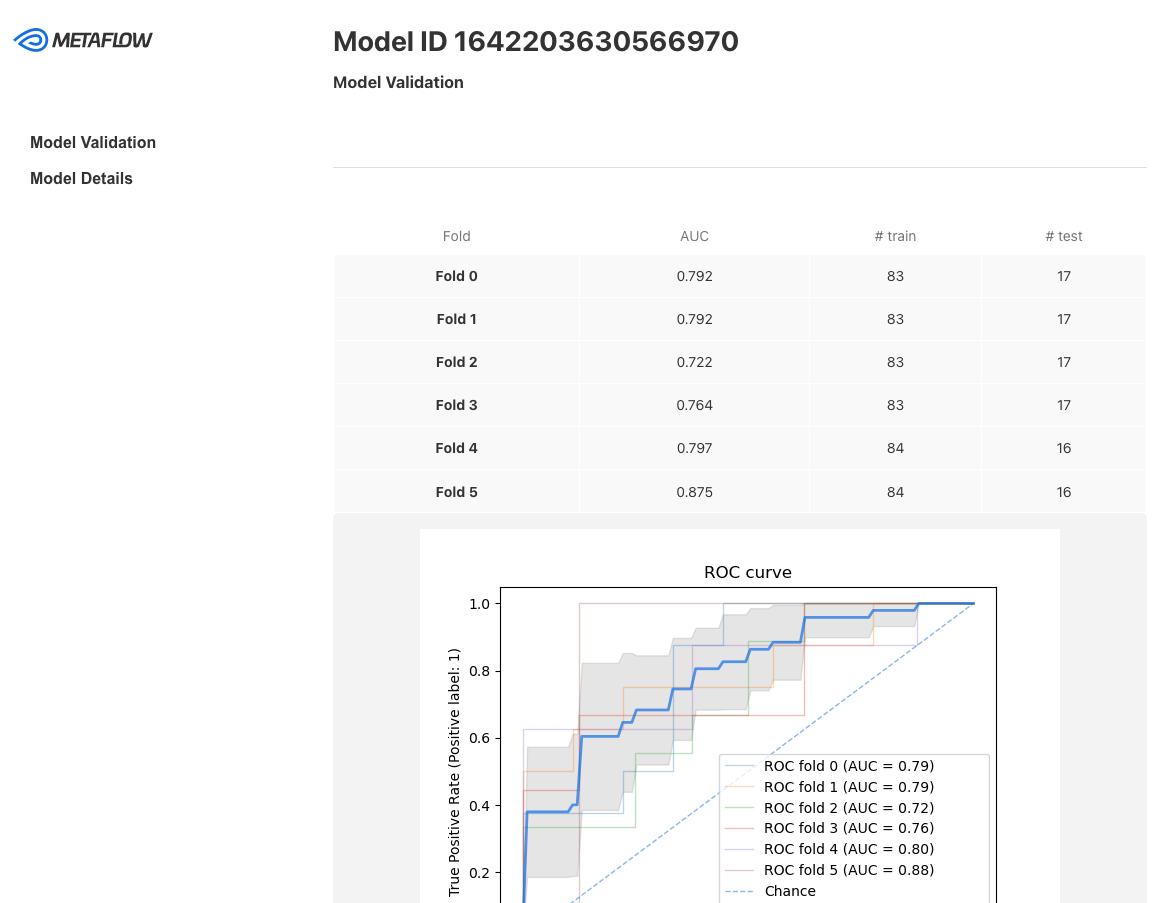

Each model could be accompanied by a card showing model validation metrics. For instance, you can easily customize the cards to look something like this:

Note that cards don’t change the behavior of the workflow in any way. They are created and stored independently of the flow or task. Should something fail during the creation of a card, the execution of the workflow is not affected, which makes cards safe to use even in sensitive production deployments.

How to view cards

Currently, there are three main mechanisms for viewing cards, which are discussed in detail below:

- You can use the Metaflow CLI on the command line to view cards in a browser.

- You can use the get_cards API to access cards programmatically, e.g. in a notebook.

- If you have the Metaflow Monitoring GUI deployed, cards will automatically show in the task page, as shown in the video above.

Crucially, cards work in any compute environment: on a laptop, in remote tasks, or when the flow is scheduled to run automatically. Hence, you can use cards to inspect and debug results during prototyping, as well as to monitor the quality of production runs.

Start developing cards

You can customize the content of cards as much or as little as you want. You can attach a Default Card to any existing workflow without changing anything in the code. Or, with a few lines of Python, you can create a card with custom content by using built-in Card Components. With a few additional lines, you can update cards in real time during task execution.

If you need even more flexibility, you can find or create a Card Template to output a report formatted with arbitrary HTML and Javascript.

Learn more about these approaches in the following subsections:

- Effortless Task Inspection with Default Cards

- Easy Custom Reports with Card Components

- Updating Cards During Task Execution

- Advanced, Shareable Cards with Card Templates

If you are unsure, start with Default Cards, which explains the basics of card usage. For technical details, see the API reference, which contains a complete guide to all card APIs.

You can test example cards interactively in Metaflow Sandbox, conveniently in the browser.